
What Does Content Automation Cost? A Comparison between GPT and Data-to-Text

Reading Time 19 mins | April 11, 2023 | Written by: Anne Geyer

Automation with GPT (Generative Pre-trained Transformer) and Data-to-Text software has revolutionized the content creation process. Companies of all sizes are now able to quickly create text for a variety of requirements, from product descriptions to internal communications.

But when is it worth using GPT tools, and when is it worth automating content with Data-to-Text software? This is an important consideration for companies in order to save time and money when creating content and to optimize processes in a sustainable and efficient way.

In the following, we use sample calculations based on real figures from our customers to show when it is worthwhile to use GPT or Data-to-Text software. To make our calculations easier to understand, we begin by briefly describing the differences between the two content creation technologies, GPT and Data-to-Text.

Table of Contents:

1. How Do GPT and Data-to-Text Work and How Do They Differ?

1.1 Data-to-Text: What Is It and How Does It Work?

1.2 GPT: What Is It and How Does It Work?

2. GPT vs. Data-to-Text: 3 Use Cases

3. What Is the Demand for Words per Month?

3.1 GPT Models: Cost and Time by Use Case

3.2 Data-to-Text: Cost and Time by Use Case

- Small Use Case (Approx. 130 Product Descriptions)

- Medium-Sized Use Case (Approx. 5,500 Product Descriptions)

- Customized Use Case (More Than 400,000 Product Descriptions)

4. Timeliness, Quality Assurance, and Multilingualism

1. How Do GPT and Data-to-Text Work and How Do They Differ?

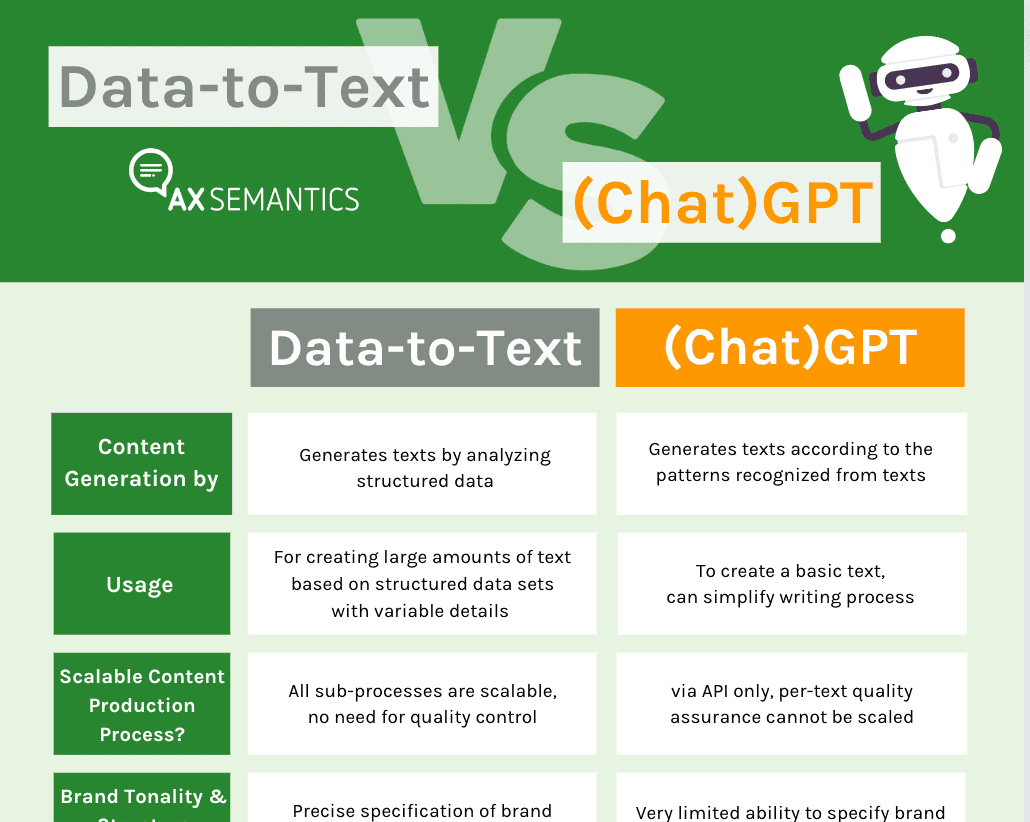

Both GPT and Data-to-Text are technologies for generating natural language texts ("Natural Language Generation" or "NLG" for short). At first glance, the two technologies seem very similar, but they work in different ways.

1.1 Data-to-Text: What Is It and How Does It Work?

Data-to-Text refers to the automated production of natural language texts based on structured data as well as user-defined rules and specifications, such as tonality and structure. Structured data are properties that are available in tabular form, for instance. Examples of structured data include product properties from a PIM system or match data for a soccer game. In other words, they contain information that can be used in texts.

This means that the user has full control over the text result, can intervene in the automated content creation process at any time, and can make updates or adjustments. This ensures text consistency, meaningfulness, and quality. The texts are also personalizable and scalable, which is a great advantage when a large amount of text is required. Scalable text creation means that the tool can create hundreds of texts, e.g. on products with variable details, in just a few moments based on structured data. In addition, after an initial setup, text creation can be automated in multiple languages with consistent quality.

1.2 GPT: What Is It and How Does It Work?

GPT stands for "Generative Pre-trained Transformer". It is a language model that learns from existing text and can provide different ways to end a sentence. It has been trained with hundreds of billions of words representing a significant portion of the Internet – including the entire corpus of the English Wikipedia, countless books, and a dizzying number of web pages.

GPT creates product descriptions depending on input prompts, i.e. the information entered. The more detailed the instruction, the more accurate the output text. However, it may be necessary to optimize the prompt. In any case, quality control and possible corrections are required for the output texts.

In contrast to Data-to-Text, GPT software generates only single texts, even though this can be done rapidly and thus quickly results in a large amount of text. The user has no control over the generated content when working with GPT software. Multilingualism is possible with many GPT tools, but the same applies here: checking the texts for correctness is essential!

How do the two technologies differ? A comparison:

2. GPT vs. Data-to-Text: 3 Use Cases

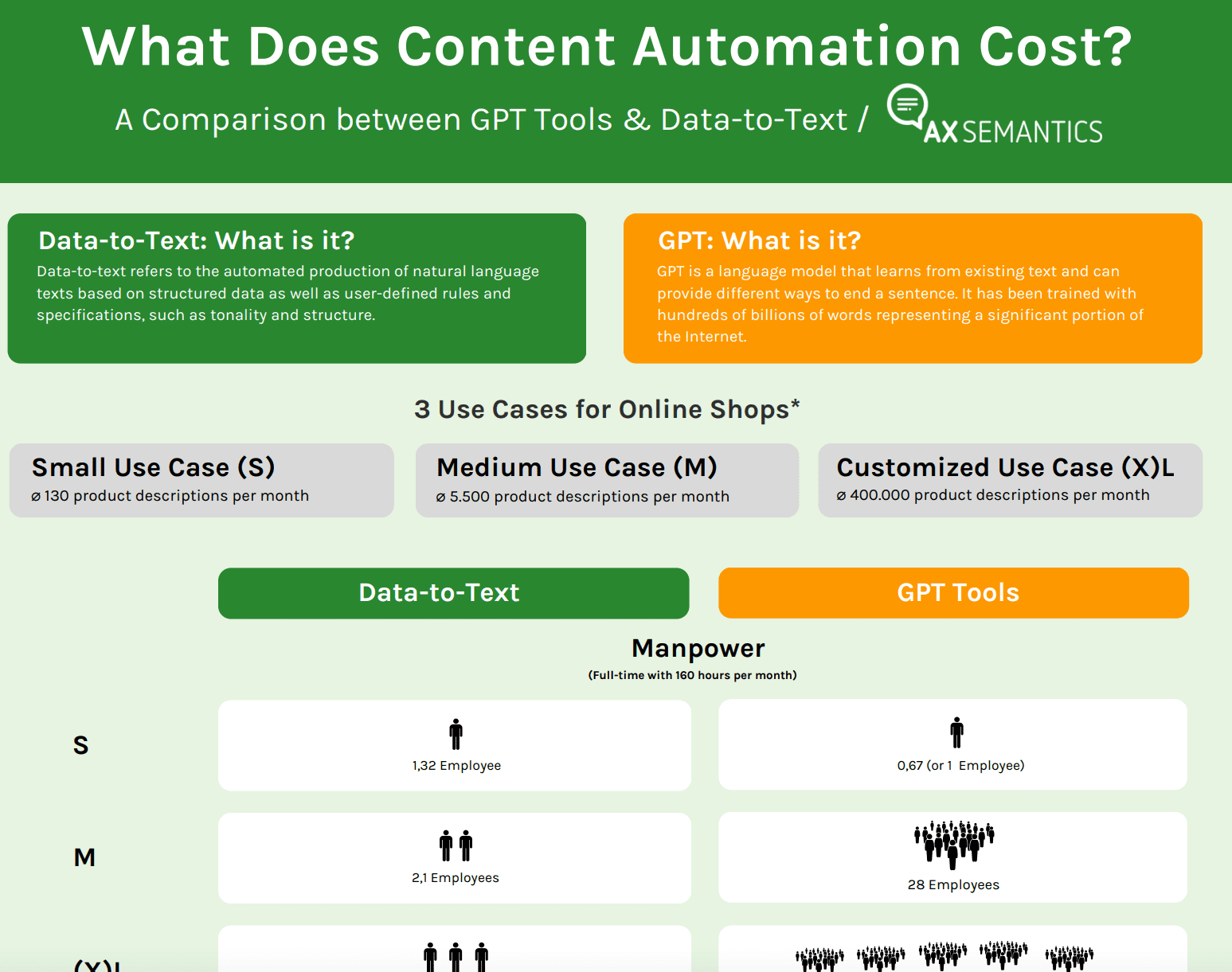

When to use which technology depends on your use case. GPT technology is suitable for finding inspiration or a basic framework for a continuous text, e.g. for a blog post. Data-to-Text software, by contrast, is used in companies that require larger amounts of text and whose text creation involves repetitive processes, because this is where the scaling effect pays off.

In the following, we focus specifically on the creation of product descriptions. Here, the quantity of the required texts plays a role. Based on the customer database of AX Semantics, we make a subdivision according to company size and the corresponding text requirements.

We classify the use cases by the number of product descriptions required:

- small use case: 130 product descriptions per month

- medium use case: 5,500 product descriptions per month

- customized use case: more than 400,000 product descriptions per month

According to our analyses, the average length of a meaningful, user-friendly product description that also meets the SEO criteria for a good ranking is 150 words. We use this number of words as a fixed point for the calculations for each use case.

3. What Is the Demand for Words per Month?

If we multiply the monthly demand for product descriptions by the average length of a product description of 150 words, we get the following results per use case:

- small use case: (150 * 130 =) 19,500 words per month

- medium use case: (150 * 5,500 =) 825,000 words per month

- customized use case: (150 * 400,000 =) 60 million words per month
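The multiplication above can be reproduced with a minimal Python sketch. The figures come from the article; the names `WORDS_PER_DESCRIPTION`, `DESCRIPTIONS_PER_MONTH`, and `monthly_word_demand` are illustrative assumptions, not part of any tool.

```python
# Average length of a product description, as stated in the article.
WORDS_PER_DESCRIPTION = 150

# Product descriptions required per month for each use case (from the article).
DESCRIPTIONS_PER_MONTH = {"small": 130, "medium": 5_500, "customized": 400_000}


def monthly_word_demand(descriptions: int, words_each: int = WORDS_PER_DESCRIPTION) -> int:
    """Return the number of words needed per month for a given volume."""
    return descriptions * words_each


word_demand = {
    name: monthly_word_demand(count) for name, count in DESCRIPTIONS_PER_MONTH.items()
}

for name, words in word_demand.items():
    print(f"{name}: {words:,} words per month")
```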

Given these numbers, it's clear that manual copywriting is not affordable. Companies with different content needs are therefore faced with the question of whether GPT or Data-to-Text technology is the best solution for them. The first thing to note is that the best solution is the one that ties up the fewest resources, such as money, time and employee effort, and that makes text maintenance (updates, customizations, etc.) easier.

Now let's take a look at how much time needs to be invested in text creation and text maintenance, and at the costs involved in hiring employees for text creation. For GPT software, we base our calculations on tools like Jasper.ai, OpenAI, Neuroflash and ChatGPT. For Data-to-Text software, we use AX Semantics.

3.1 GPT Models: Cost and Time by Use Case

1. Input and Creation of Prompts

GPT software models generate texts based on input prompts. Usually several input attempts are necessary to get a suitable text. Depending on the GPT software and the chosen pricing model, the user buys a certain number of words that can be generated by the software per month. However, not all text output is usable. Thus, the user discards many words or even entire text outputs.

If we assume that a user makes about three adjustments to a product description on average, this results in three times the required number of words. In terms of our use cases, this means:

- small use case: (19,500 * 3 =) 58,500 words per month

- medium use case: (825,000 * 3 =) 2,475,000 words per month

- customized use case: (60,000,000 * 3=) 180,000,000 words per month
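As a rough check of the tripled word demand, the sketch below multiplies the monthly requirement by the assumed three attempts per description. The factor of three is the article's assumption; the variable names are hypothetical.

```python
# Assumption from the article: about three attempts per product description.
ATTEMPTS_PER_DESCRIPTION = 3

# Required words per month for each use case (150 words per description).
required_words = {"small": 19_500, "medium": 825_000, "customized": 60_000_000}

# Words the GPT tool actually has to generate, including discarded attempts.
generated_words = {
    name: words * ATTEMPTS_PER_DESCRIPTION for name, words in required_words.items()
}

for name, words in generated_words.items():
    print(f"{name}: {words:,} generated words per month")
```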

Such a high demand is not only hard to manage; it also affects pricing. License fees for GPT tools are calculated according to "tokens", i.e. according to the volume of generated text, so they turn out correspondingly high, especially in the medium-sized and customized use cases. However, because prices fluctuate from provider to provider, we exclude these costs from our further calculations.

For writing a prompt and optimizing it if necessary, we have calculated only a small amount of time because we assume that the prompt is based on structured product data. Therefore, we calculate that proofreading and correcting a prompt takes 10 minutes (about 0.16 hours). Multiplied by the number of product descriptions required per month, we get the following time expenditure:

- small use case: (0.16 * 130 required product descriptions =) 21 h

- medium use case: (0.16 * 5,500 =) 880 h

- customized use case: (0.16 * 400,000 =) 64,000 h
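The prompt effort can be sketched as follows, using the article's rounded value of 0.16 hours per prompt. `PROMPT_HOURS` and `prompt_hours` are illustrative names, and rounding to whole hours is an assumption made to match the figures above.

```python
# About 10 minutes per prompt, rounded to 0.16 hours as in the article.
PROMPT_HOURS = 0.16

descriptions_per_month = {"small": 130, "medium": 5_500, "customized": 400_000}

# Monthly hours spent on writing and correcting prompts, rounded to whole hours.
prompt_hours = {
    name: round(PROMPT_HOURS * count) for name, count in descriptions_per_month.items()
}

for name, hours in prompt_hours.items():
    print(f"{name}: {hours:,} h per month")
```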

The analysis shows that the time required for the small use case is still manageable with GPT software. In the medium use case, however, the effort is already so great that it would take far too long for companies in the fast-moving e-commerce sector to publish product descriptions. In addition, the organization of such a large amount of text and its maintenance, e.g. through updates, is no longer manageable.

2. Multilingualism

Internationally operating companies need content in many languages. Manual translation is too time consuming. GPT software is also hardly suitable, as the translation may contain incorrect information. Therefore, quality control of the content output is always necessary. But let's take a look at how much time it takes to create multilingual texts.

According to the analysis of customer data by AX Semantics, the majority of companies work with the following number of languages, depending on the use case:

- small use case: fewer than 10 languages

- medium use case: fewer than 50 languages

- customized use case: more than 30 languages

This shows that accounts that need the largest amount of text do not necessarily require content in the most languages. In fact, the medium use cases often need content in the most languages.

We calculate 0.5 hours for reviewing and correcting each text output in different languages. This is because there is not only a proofreading effort but also a coordination effort: on the one hand with the translators, on the other hand with the international branches that operate in other markets and need the translated texts.

If we multiply the 0.5 hours by the number of product descriptions required, we get the following time expenditure:

- small use case: (0.5 h * 130 product descriptions =) 65 h/month

- medium use case: (0.5 h * 5,500 =) 2,750 h/month

- customized use case: (0.5 h * 400,000 =) 200,000 h/month

Again, in the small use case, the effort still seems manageable, but in the medium and customized cases, the time required is no longer manageable.

3. Total Time Expenditure

But how much time do companies need in total for text creation with GPT software? If we add the time needed to create a prompt, the time needed to proofread a text (which the figures below set equal to the prompt time), and the time needed to check the multilingual texts, we get the following figures:

- small use case: (21 h + 21 h + 65 h =) 107 h/month

- medium use case: (880 h + 880 h + 2,750 h =) 4,510 h/month

- customized use case: (64,000 h + 64,000 h + 200,000 h =) 328,000 h/month

Now, assuming that the companies have full time employees working 160 hours per month each, they need to hire the following number of people to meet the content demand:

- small use case: (107 hours / 160 hours =) 0.67 (or 1 employee)

- medium use case: (4,510 h / 160 h =) 28 employees

- customized use case: (328,000 h /160 h =) 2,050 employees
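The total effort and the resulting headcount can be sketched in a few lines of Python. The proofreading time of 0.16 hours is an assumption inferred from the figures above (the article adds the same number of hours twice); all names are hypothetical.

```python
PROMPT_HOURS = 0.16      # per description, from the article
PROOFREAD_HOURS = 0.16   # assumption: equal to the prompt time, inferred from the totals
REVIEW_HOURS = 0.5       # multilingual review per description, from the article
FULL_TIME_HOURS = 160    # monthly hours of one full-time employee


def gpt_effort(descriptions: int) -> tuple[float, float]:
    """Return (hours per month, full-time employees) for a monthly volume."""
    hours = descriptions * (PROMPT_HOURS + PROOFREAD_HOURS + REVIEW_HOURS)
    return hours, hours / FULL_TIME_HOURS


for name, count in {"small": 130, "medium": 5_500, "customized": 400_000}.items():
    hours, employees = gpt_effort(count)
    print(f"{name}: {hours:,.1f} h per month, {employees:,.2f} employees")
```

Because the hours are not rounded at each step here, the small use case comes out at 106.6 hours rather than 107.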

4. Total Costs

But what do these numbers mean for the costs incurred in terms of content production? There are different models for companies here: Do they employ freelancers or full-time employees? Do they pay per word or a fixed hourly rate?

The profession of prompt writing, or prompt engineering, is very new, and a professional earns around 300,000 US dollars a year. For companies, this is a significant cost that should not be underestimated. For the sake of simplification, however, our sample calculation assumes a fixed hourly rate, which we set extremely low at 30 Euros per hour per employee. This results in the following monthly costs:

- small use case: (30 € * 106.6 hours =) 3,198 € per month (106.6 hours is the unrounded total behind the 107 hours above)

- medium use case: (30 € * 4,510 hours =) 135,300 € per month

- customized use case: (30 € * 328,000 hours =) 9,840,000 € per month

In addition, there is the cost of licensing the GPT tools, which we will ignore here due to price fluctuations from tool to tool. Broken down to the individual texts, this results in the price per product description:

- small use case: (3,198 € / 130 product descriptions =) 24.60 €

- medium use case: (135,300 € / 5,500 =) 24.60 €

- customized use case: (9,840,000 € / 400,000 =) 24.60 €
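A short sketch shows why the price per product description comes out the same in every use case: each description costs a fixed number of hours, so the total grows in step with the volume. The per-description hours (0.16 + 0.16 + 0.5) are taken from the calculation above; the function name `gpt_cost` is an illustrative assumption.

```python
HOURLY_RATE_EUR = 30                      # hourly rate assumed in the article
HOURS_PER_DESCRIPTION = 0.16 + 0.16 + 0.5  # prompt + proofreading + multilingual review


def gpt_cost(descriptions: int) -> tuple[float, float]:
    """Return (total monthly cost, cost per description) in Euros."""
    total = round(descriptions * HOURS_PER_DESCRIPTION * HOURLY_RATE_EUR, 2)
    return total, round(total / descriptions, 2)


for name, count in {"small": 130, "medium": 5_500, "customized": 400_000}.items():
    total, each = gpt_cost(count)
    print(f"{name}: {total:,.2f} EUR per month, {each:.2f} EUR per description")
```

Under these assumptions the per-description cost is simply 0.82 hours times 30 Euros, regardless of how many texts are produced.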

Logically, the price per product description is the same in all cases: because every step has to be repeated for every text, the cost per text does not fall as the amount of text increases. Looking at the use cases, it becomes clear that the use of GPT tools seems to be worthwhile for small use cases, but is unsuitable for medium-sized and customized use cases, as time, personnel and cost requirements for the content team are far too high and therefore uneconomical.

3.2 Data-to-Text: Cost and Time by Use Case

Data-to-text software requires structured data. The advantage of this is that once the data is well structured and complete, the text production process runs smoothly. In addition, text production is scalable, meaning that no matter how many product descriptions the company wants to publish and how much text output it needs, the software will reliably complete the task in a very short time. There is no need for additional personnel, as the machine generates the texts based on rules. This ensures consistent quality.

AX Semantics offers different pricing plans depending on the number of product descriptions needed per month. After the initial setup, the software creates unique texts that also meet SEO requirements. But what are the costs and resources required to use AX Semantics in our three use cases?

According to our analysis, customers need about 80 hours for the one-time initial setup of their content automation project to structure the data, and another 150 hours to set up the logics and rules. In the case of multilingualism, about 14 hours per language are required for the conversion of grammatical rules. These three tasks add up to a total of 244 hours.
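The one-time setup effort can be written as a small function. The 244 hours correspond to a setup with one language; treating the 14 hours as scaling linearly with each additional language is an assumption based on the "per language" wording, and the names are hypothetical.

```python
DATA_STRUCTURING_HOURS = 80   # one-time effort to structure the data
RULE_SETUP_HOURS = 150        # one-time effort to set up logics and rules
HOURS_PER_LANGUAGE = 14       # grammatical rules, per language


def setup_hours(languages: int = 1) -> int:
    """Return the one-time setup effort in hours for a number of languages.

    Assumes the per-language effort simply adds up for each language.
    """
    return DATA_STRUCTURING_HOURS + RULE_SETUP_HOURS + HOURS_PER_LANGUAGE * languages


print(setup_hours(1))  # the single-language total used in the article
```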

1. Small Use Case (Approx. 130 Product Descriptions)

For the small use case, AX Semantics offers a pricing plan of 279 € per month. With this plan, users can create automated product descriptions in a scalable way. Since the text creation is rule-based, only the initial effort for setting up structured data and the text concept is significant. This means that the total time required, as explained above, is 244 hours.

In addition, there is an average optimization time of 48.8 hours. The optimization effort here refers to the further development of the text concepts and statements as well as the personalization of the texts along the customer journey. The amount of time spent on text maintenance varies from company to company and depends on a number of factors. Our analysis is based on an average value for the optimization effort or in other words, the average time AX customers spend optimizing their texts.

As in the GPT calculation model, the total cost, calculated from 30 Euros per hour and the effort (here 244 hours), is 7,320 Euros in the small use case. Together with the base price of 279 Euros, this is a total cost of 7,599 Euros. Broken down to the cost of creating a single product description, this means a cost of 58 Euros. At the same time, 1.5 employees are entrusted with the task of content automation. The initial effort is reduced in the first few weeks until the automation project is fully set up. After that, users only carry out optimization or development work.
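The small use case figures can be retraced with the sketch below. All inputs are taken from the article; the variable names are illustrative.

```python
HOURLY_RATE_EUR = 30     # same hourly rate as in the GPT calculation
SETUP_HOURS = 244        # one-time initial effort
LICENSE_EUR = 279        # monthly plan price for the small use case
DESCRIPTIONS = 130       # product descriptions per month
FULL_TIME_HOURS = 160    # monthly hours of one full-time employee

labor_cost = HOURLY_RATE_EUR * SETUP_HOURS       # cost of the setup effort
total_cost = labor_cost + LICENSE_EUR            # setup effort plus license
cost_per_description = total_cost / DESCRIPTIONS
employees = SETUP_HOURS / FULL_TIME_HOURS        # full-time equivalents

print(f"labor: {labor_cost:,} EUR, total: {total_cost:,} EUR")
print(f"per description: {cost_per_description:.2f} EUR, employees: {employees:.2f}")
```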

Due to the scaling effect, costs and personnel expenses decrease over time, as shown by the calculation of the price of a single product description over the course of the first three months:

| Month | Composition | Cost per product description, small use case | Personnel (employees) |
| --- | --- | --- | --- |
| 1st month | (2/3 initial cost + software price) | 40 € | 1.32 |
| 2nd month | (1/3 initial cost + software price) | 32 € | 0.81 |
| 3rd month | (optimization effort + software price) | 13 € | 0.31 |

2. Medium-Sized Use Case (Approx. 5,500 Product Descriptions)

The medium-sized use case requires more product descriptions, so AX Semantics offers a higher price plan of 2,300 Euros per month. The initial effort for this use case is 366 hours to create the logic and rule sets, and the optimization effort is 91.5 hours on average.

The labor cost of the initial effort (30 Euros hourly wage * 366 hours) is 10,980 Euros; with the base price of 2,300 Euros, the total cost is 13,280 Euros. The cost per product description is about 2 Euros. The required manpower remains unchanged at 1.5 employees. This is where the benefits of scaling through automation become very evident. The initial setup effort is greatly reduced and eventually replaced by the optimization effort, such as quality assurance and corrections.

The scaling effect significantly reduces costs and personnel expenses in the first three months, as the calculation for the price per product description shows:

| Month | Composition | Cost per product description, medium use case | Personnel (employees) |
| --- | --- | --- | --- |
| 1st month | (2/3 initial cost + software price) | 2 € | 2.10 |
| 2nd month | (1/3 initial cost + software price) | 2 € | 1.33 |
| 3rd month | (optimization effort + software price) | 1 € | 0.57 |

3. Customized Use Case (More Than 400,000 Product Descriptions)

The same applies to the customer-specific use case. Here, too, the number of employees remains unchanged at 1.5 employees thanks to the scaling effect. For the customized use case, AX Semantics offers a tailored pricing plan based on the company's individual requirements. The calculations are based on the text requirements of companies that need more than 400,000 product descriptions per month. The initial effort is approximately 488 hours and the optimization effort is 163 hours.

The labor cost of creating product descriptions is 14,640 Euros, based on 488 hours at an hourly rate of 30 Euros. Added to this is the license fee of 9,000 Euros, which adds up to a total cost of 23,640 Euros. The cost per product description is about 0.05 Euros.
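To compare the three Data-to-Text plans side by side, the following sketch applies the same formula (setup hours times hourly rate, plus license fee) to each use case. The inputs are the article's figures; the `Plan` class and its fields are hypothetical names. Computed without rounding, the customized use case comes out at just under 6 cents per description, which the article gives as about 5 cents.

```python
from dataclasses import dataclass

HOURLY_RATE_EUR = 30  # hourly rate assumed throughout the article


@dataclass(frozen=True)
class Plan:
    descriptions: int    # product descriptions per month
    setup_hours: float   # one-time initial effort in hours
    license_eur: float   # monthly software price in Euros

    def total_cost(self) -> float:
        """Setup labor plus one month of license fees."""
        return self.setup_hours * HOURLY_RATE_EUR + self.license_eur

    def cost_per_description(self) -> float:
        return self.total_cost() / self.descriptions


plans = {
    "small": Plan(130, 244, 279),
    "medium": Plan(5_500, 366, 2_300),
    "customized": Plan(400_000, 488, 9_000),
}

for name, plan in plans.items():
    print(f"{name}: {plan.total_cost():,.0f} EUR total, "
          f"{plan.cost_per_description():.3f} EUR per description")
```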

Admittedly, the cost of 5 cents per product description is already very low. But even in this use case, the scaling effect comes into play, as the costs and personnel expenses decrease in the first few months. This is shown in the table for the price per product description for the first three months of use:

| Month | Composition | Cost per product description, customized use case | Personnel (employees) |
| --- | --- | --- | --- |
| 1st month | (2/3 initial cost + software price) | 0.05 € | 3.05 |
| 2nd month | (1/3 initial cost + software price) | 0.05 € | 2.03 |
| 3rd month | (optimization effort + software price) | 0.03 € | 1.02 |
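The personnel figures in the three tables appear to follow one pattern: the share of the initial effort falling into that month, plus the optimization effort, divided by 160 working hours. This is an inference from the published numbers, not a formula the article states, and the sketch below matches the tables only to within rounding. All names are hypothetical.

```python
FULL_TIME_HOURS = 160  # monthly hours of one full-time employee

# (initial effort, optimization effort) in hours, from the article.
EFFORT_HOURS = {
    "small": (244, 48.8),
    "medium": (366, 91.5),
    "customized": (488, 163),
}

# Share of the initial effort assumed to fall into months 1, 2 and 3.
INITIAL_SHARE = (2 / 3, 1 / 3, 0.0)


def staffing(initial: float, optimization: float) -> list[float]:
    """Return full-time employees needed in each of the first three months."""
    return [
        (share * initial + optimization) / FULL_TIME_HOURS
        for share in INITIAL_SHARE
    ]


for name, (initial, optimization) in EFFORT_HOURS.items():
    months = ", ".join(f"{value:.2f}" for value in staffing(initial, optimization))
    print(f"{name}: {months}")
```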

4. Timeliness, Quality Assurance, and Multilingualism

The sample calculations show that Data-to-Text software is particularly worthwhile for companies that need to generate a large amount of text, thanks to the scaling effect.

If you need to create a large number of texts, such as product or category descriptions, Data-to-Text software such as AX Semantics is suitable. This enables the generation of thousands of unique texts with consistent quality. In addition, SEO and quality standards can be implemented in a scalable way.

In case of a change, e.g. a modification of the manufacturer or product name, only a quick adjustment of the data is necessary and all texts are automatically updated. This saves a lot of effort and time.

With GPT software, on the other hand, each text must be checked and, if necessary, corrected. In addition, changes have to be made for each text individually, which is an unmanageable task for content teams that deal with many product descriptions. Therefore, automating content creation for large volumes of text is only advisable with Data-to-Text software, not with GPT software. In these cases, GPT software can only support or optimize existing editorial processes.

The following illustration lists the human and financial costs of GPT and data-to-text software by use case, highlighting the benefits of scale achieved with AX Semantics:

5. Conclusion

Both GPT and Data-to-Text software are excellent tools for companies looking to automate their content creation process. But which software is best depends on the individual use case. For a small use case, GPT software initially has the edge in terms of cost effectiveness, but it doesn't offer the benefit of scalability. Once the initial setup is complete, AX Semantics becomes the less expensive option, at around 13 Euros per product description by the third month.

Due to the scaling effect, AX Semantics is particularly suitable for larger text requirements. This effect is particularly evident in the costs and personnel for use cases with large text requirements, where the price per product description is reduced to 5 cents. The cost per product description is very low compared to the manual method of creating product descriptions. Data-to-text is profitable compared to GPT models, where the effort remains constant across use cases and there are no scaling effects.

With GPT tools, scaling the text generation process is not possible. The texts can only be optimized manually. If there is a high demand for texts, it is hardly worthwhile to use these tools, because the timely input and especially the monitoring of the text output requires a lot of time and manpower, and thus costs money. With a large volume of text, this quickly becomes a daunting task that either requires the hiring of many new employees or, if not reviewed, carries the risk of errors. If product details change later on, e.g. the brand name or certain components, this information has to be entered manually for each text.

With Data-to-Text, this is not the case. Thanks to centralized management, a minor adjustment of the data is enough to automatically update all affected texts. In addition, the scalability of the text creation process provides the benefits of consistency and quality as well as time and cost savings. So with AX Semantics, companies can save time and money while creating unique, high-quality product descriptions.

*All software prices, costs and assumptions in the sample calculation are valid as of March 27, 2023.

FAQ

GPT stands for Generative Pre-trained Transformer and is a model that uses deep learning to produce human-like language. The NLP (natural language processing) architecture was developed by OpenAI. GPT uses a large corpus of data to generate human-like language representations. It is a language model that learns from existing text and can provide different ways to end a sentence. It has been trained on hundreds of billions of words, representing a significant portion of the Internet - including the entire corpus of the English Wikipedia, countless books, and a dizzying number of web pages.

Data-to-Text refers to the automated generation of natural language text based on structured data. Structured data are attributes that are available, for example, in the form of tables. The users have full control over the text result, as they can intervene in the text generation process at any time and make updates or adjustments. This control ensures text consistency, meaningfulness and quality. Texts are also customizable and scalable. This means that hundreds of texts on products with variable details can be created in a matter of moments.

In short, data-to-text is used in e-commerce, the financial and pharmaceutical sector, in media, and publishing.

GPT-3 can be helpful for brainstorming and finding inspiration, for example when the user is suffering from writer's block. Using GPT-3 in chatbots to answer recurring customer queries is also very useful, as having humans generate the text output is inefficient and impractical. For more in-depth information, download our one-pager on Data-to-Text vs. (Chat)GPT.

The best content generator is the one that quickly writes original content without errors and inaccuracies. In this case, data-to-text programs are better than GPT platforms.

But if you want a tool that gives you exciting content ideas, you should try an article generator based on GPT. Once it has generated an article, you can scan it to detect any errors and make the necessary corrections.