
GPT-3 or Data-to-Text: Which Text Generator Is Right For You?

Reading Time 13 mins | January 18, 2022 | Written by: Anne Geyer

Automated content creation, known as Natural Language Generation (NLG), is considered one of the most future-oriented technologies.

This is due not only to the ever-growing importance of online commerce and the associated volume of content that has to be generated, such as product descriptions. NLG is also a helpful tool for copywriters and content managers, in other words for anyone involved in writing any kind of content.

In this context, two different types of text generators are very clearly distinguishable: GPT and Data-to-Text.

The Two Technologies: GPT and Data-to-Text

Generative Pre-trained Transformer (GPT) is a language production system that uses Deep Learning to create content.

Data-to-Text refers to the machine-automated production of natural language content based on data.

But what exactly can these two technologies do? In what ways do they differ from each other? Should copywriters and content managers be afraid that their jobs will be replaced by this type of artificial intelligence?

This article presents both technologies, describes their functionality, and highlights their strengths and weaknesses.

GPT-3 and Data-to-Text: Capabilities and Differences

Data-to-Text Technologies: Definition

Data-to-text technologies are a subfield in the artificial intelligence sector. These programs analyze structured data and generate ready-to-use natural language content from this data.

There are multiple natural language generation tools that differ in their feature sets as well as in price. One company that distinguishes itself through the large number of languages it supports for automated content generation is AX Semantics from Stuttgart, Germany.

Where Is Data-to-Text Used?

Data-to-text software is used by all types and sizes of companies.

These include banks and other companies from the financial sector, the pharmaceutical sector, and the media and publishing sector, along with companies in the broad field of e-commerce.

Data-to-text technologies are of tremendous help whenever large amounts of content are to be created from structured data sets. The technology comes into play when similar content with variable details, or content based on data or statistics, is to be created.

The following examples are worth mentioning:

- Generation of product descriptions and category descriptions in e-commerce

- Reporting in the pharmaceutical/health, finance, and accounting fields

- Creation of landing pages following SEO criteria

- Generation of personalized customer targeting through content personalization

- Reporting for sports or weather news

- Stock market updates and election results

- Offer descriptions in the tourism sector and property descriptions for the real estate industry

How Does Data-to-Text Work?

Data-to-text programs require structured data as a basis.

To generate finished content from this data, the user configures rules and logic in the software. In this way, the relevant information is extracted from the data set and translated into natural language content. Variations can also be built in: the sentence structure stays the same, but individual words or phrases within the sentence change. As a result, unique content is generated and duplicate content is significantly reduced.
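The interplay of data, rules, and variation can be illustrated with a minimal Python sketch. It is not how any particular data-to-text product is implemented; the product record, the field names (`weight_g`, `waterproof`), the 300 g threshold, and the function `describe` are all assumptions made up for this illustration.

```python
import random

# Hypothetical product record, e.g. one row from a PIM export or CSV file.
product = {
    "name": "Trail Runner 2",
    "category": "running shoe",
    "weight_g": 240,
    "waterproof": True,
}

# Interchangeable openings: the structure stays the same, only the wording varies.
OPENINGS = [
    "The {name} is a {category}",
    "Meet the {name}, a {category}",
    "Our {name} is a {category}",
]

def describe(record, rng):
    """Turn one structured record into a short description using fixed rules."""
    sentence = rng.choice(OPENINGS).format(**record)
    # Rule: emphasize the weight only if the data supports it (assumed threshold).
    if record["weight_g"] < 300:
        sentence += " that weighs just {weight_g} g".format(**record)
    else:
        sentence += " that weighs {weight_g} g".format(**record)
    sentence += "."
    # Rule: mention waterproofing only when the field is true.
    if record.get("waterproof"):
        sentence += " It is waterproof."
    return sentence

text = describe(product, random.Random(0))
print(text)
```

Every statement in the output traces back to a field in the record, which is why the same rules can be applied to thousands of records once they have been configured.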

| Data-to-text software products differ from GPT in that they are not trained on online content. Instead, they use data stored in CRM or PIM systems, for example, or in Excel, CSV, and JSON files. This data is imported directly into the software or transferred via an API. |

| GPT-3, however, generates content entirely on its own, with no further intervention from a human. The neural network alone generates an output from the given input. This can lead to false or otherwise unwanted statements. |

Data-to-Text also offers more options than GPT-3 when it comes to language variety. Whereas GPT-3 is trained mainly on English content, Data-to-Text providers support multiple languages. The AX Semantics software, in particular, supports more than 110 languages.

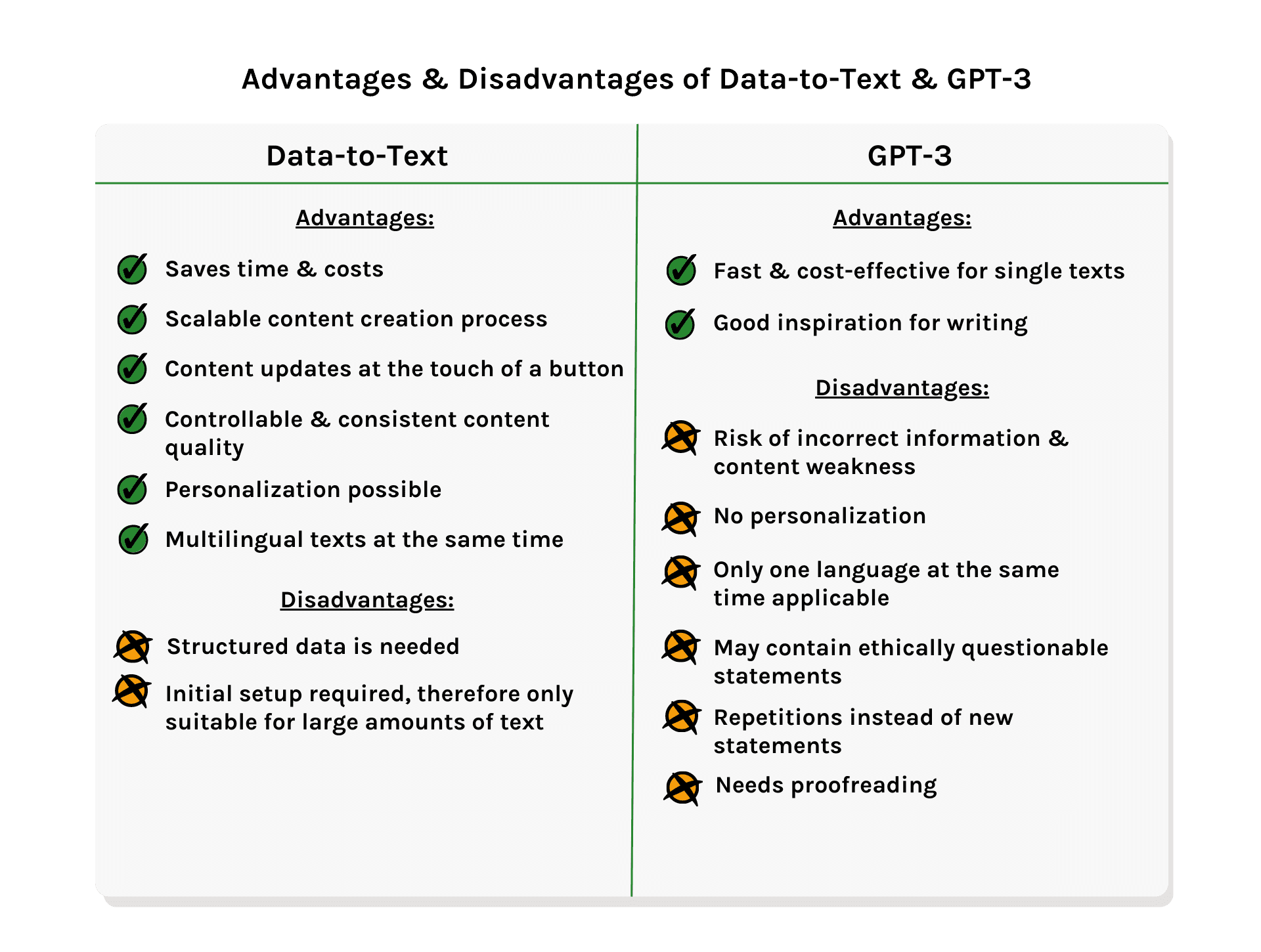

The Advantages of Data-to-Text: Speed & Control

Properly used, data-to-text can save a great deal of time and money. At the same time, it makes the content creation process scalable.

Writing large amounts of content, e.g. thousands of product descriptions for an online store, is an almost impossible task for copywriters, especially if the content has to be reviewed regularly and kept up to date, for example because of seasonal influences.

However, with Data-to-Text software, this is possible. As soon as the project is set up, all that is needed is an update of the data. Then, with just one click, already existing content is immediately updated, or unique new content is created. As a result, copywriters and editors can spend more time on creative or conceptual work.

Compared with GPT-3, the advantage of automated content generation using data-to-text is control. Because the user defines what is said and how it is phrased, false or otherwise unwanted statements by the system can be ruled out. With GPT-3, however, it is not possible to directly influence what is delivered as an output.

The Drawbacks of Data-to-Text: Data Dependency & Time Consumption

Automated content creation based on data-to-text also has its limits. If the data quality is poor or high-quality data is not reliably available, the output can be of low quality.

The technology is based on structured data in machine-readable form. Therefore, storytelling, as well as writing blog posts or social media posts, are reserved for humans. After all, these cannot be meaningfully generated through data-to-text software.

Thus, the generated content can only express the context of what appears in the data or what is derived from it.

Additionally, a certain amount of time often has to be invested in obtaining and cleaning the data that the AI needs as a basis. Together with configuring the software's rules and logic, this can take more or less time and effort depending on the project.
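Part of this data cleaning can be automated with simple checks before any text is generated. The following Python sketch shows the idea under stated assumptions: the required fields (`name`, `category`, `price`), the sample records, and the function `find_problems` are hypothetical and not taken from any specific tool.

```python
# Hypothetical schema: fields every record must provide.
REQUIRED_FIELDS = ("name", "category", "price")

def find_problems(record):
    """Return a list of human-readable problems found in one record."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None or str(value).strip() == "":
            problems.append(f"missing {field}")
    price = record.get("price")
    if price not in (None, ""):
        try:
            if float(price) <= 0:
                problems.append("price must be positive")
        except (TypeError, ValueError):
            problems.append("price is not a number")
    return problems

records = [
    {"name": "Trail Runner 2", "category": "running shoe", "price": "89.90"},
    {"name": "", "category": "sandal", "price": "abc"},
]

# Map each record index to its problems, then keep only the clean records.
report = {i: find_problems(r) for i, r in enumerate(records)}
clean = [r for i, r in enumerate(records) if not report[i]]
print(report)
```

Only the records in `clean` would be passed on to text generation; the rest would go back to whoever maintains the data.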

Apart from the Data-to-Text technology, other technologies for automated generation of content are available. GPT is one of them.

What exactly can GPT do? How does it differ from the technology behind Data-to-Text?

GPT: Definition & Background

GPT refers to a family of Large Language Models (LLMs) that use Deep Learning to process or generate natural language.

The organization behind GPT, which it first released in 2018, is OpenAI. It made the first two versions available for free and as open source.

At first, the beta phase of the third generation was also available free of charge. When this phase ended, however, access to GPT-3 became subject to a fee. The non-profit organization thus became a commercially operating company.

Following a $1 billion investment in OpenAI, Microsoft now holds an exclusive license to GPT-3. OpenAI continues to offer its public API, through which selected users can send input to GPT-3 or other OpenAI models and receive the output. Access to GPT-3's underlying code, however, is granted only to Microsoft, allowing the company to embed, repurpose and modify the model as it sees fit.

What Can GPT-3 Do And How Does GPT Work?

GPT-3 is a language technology that is trained on a huge volume of content from the internet and is able to do the following, among other things:

- Compose English content

- Conduct dialogs

- Answer questions

- Write programming code

- Design website templates

- Fill in tables

How Does GPT Work Exactly?

GPT-3 is a language prediction model. That means it is a machine learning model in the form of a neural network that transforms an input into the output it predicts to be the most likely continuation.

GPT-3 was trained with data from the Internet. Sources are Wikipedia, forums, web content and book databases. They all are the basis of the artificial intelligence behind the model.

Its output is based on the patterns the model has recognized in this training data. When a user provides a text input, the system analyzes the language and uses text prediction to generate the most likely output.
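The principle of text prediction can be shown with a deliberately tiny Python sketch. This is not how GPT-3 works internally: GPT-3 is a large neural network, whereas the toy below only counts which word follows which in a made-up mini corpus. The corpus and the functions `predict_next` and `continue_text` are assumptions for illustration.

```python
from collections import Counter, defaultdict

# Made-up training text; "." marks the end of a sentence.
corpus = (
    "the shoe is light . the shoe is waterproof . "
    "the jacket is warm . the shoe is light ."
).split()

# Count how often each word follows each other word.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1

def predict_next(word):
    """Return the most frequent follower of `word`, or None if unseen."""
    if word not in following:
        return None
    return following[word].most_common(1)[0][0]

def continue_text(start, max_words=4):
    """Extend `start` word by word until a sentence end or the word limit."""
    words = [start]
    for _ in range(max_words):
        nxt = predict_next(words[-1])
        if nxt is None or nxt == ".":
            break
        words.append(nxt)
    return " ".join(words)

print(continue_text("the"))
```

The toy model can only reproduce patterns that appear in its training text, and it has no way of checking whether the resulting sentence is true. Both properties carry over, in a far more sophisticated form, to large language models.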

The content produced by GPT-3 is of high quality and is sometimes difficult to distinguish from human-written content.

The Advantages of GPT-3: Fast & Inexpensive

The possibilities for using GPT-3 are numerous. The advantages of this particular technology are the fast, inexpensive and automatic generation of content in large volumes.

In other words, it is used in cases where a large amount of content needs to be generated automatically on the basis of a small amount of content, or in situations where it is not efficient or practical to have the text written by a human. One example is a chatbot answering recurring customer queries.

The Drawbacks of GPT-3: No Control & Misinformation

Despite the impressive language capabilities of this technology, GPT-3 has enormous drawbacks when it comes to generating content.

| An example: If you ask GPT to write an article about how absurd recycling is, it will do exactly that. In this case, GPT writes a contextually nonsensical text on why recycling is unnecessary. This is because anchoring to general knowledge or text-to-text solutions is completely absent and cannot be added. |

As the model is supplied with data from the Internet, further problems arise:

- It adopts racist or sexist remarks that the training data may contain, allows bias or swear words to flow into the content, and generates false information.

- The same content may be repeated over and over again, especially in longer texts, rather than additional information being added.

- Representational bias can occur. That is because the websites used as a basis for the training only represent a part of the world. This leads to overrepresentation of some aspects and underrepresentation of others.

Ultimately, this means the generated content should not be posted without proofreading and intensive fact-checking.

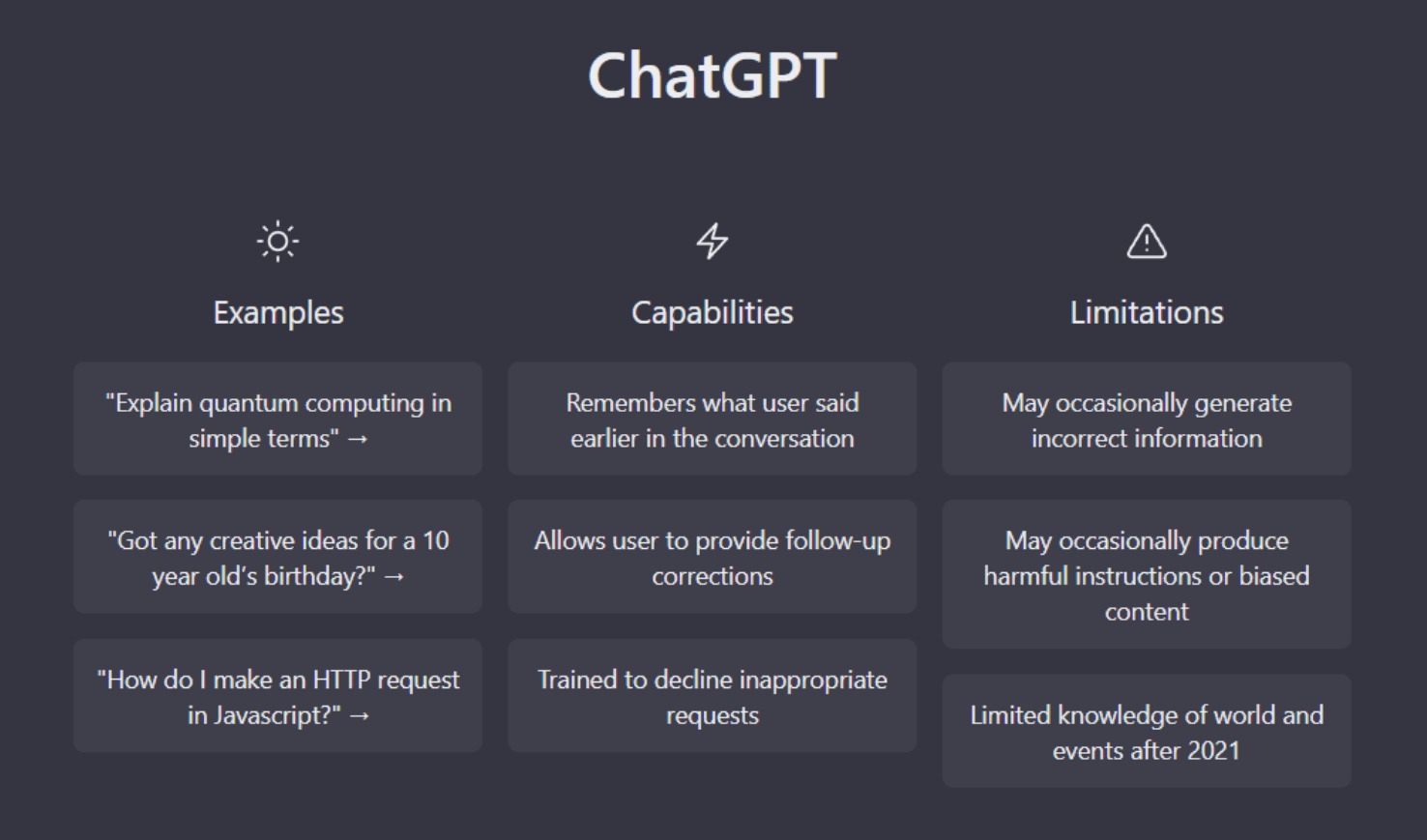

What Is ChatGPT?

GPT-3 was used as a basis to build the NLP model ChatGPT, which is capable of understanding and generating human-like conversations in real time. We will take a closer look at it in the following sections.

ChatGPT is an AI chatbot. It is designed to generate natural, human-like conversational responses in real time, and is able to do so in many different languages, one at a time. ChatGPT stems from a model in the GPT-3.5 series and is described by OpenAI as a “fine-tuned” version of it.

It was designed to give users quick, precise, and helpful answers to their questions. Its main purpose is therefore to respond to text questions in an informative or entertaining way. ChatGPT was trained on data up to early 2022. That means it can generate texts about events and developments up to that point in time.

What Is The Use Of ChatGPT?

ChatGPT can be used for various conversational tasks, ranging from customer service to online dialogue recommendation. It can be used to build virtual assistants and chatbots that can generate natural conversations with humans.

It also understands and writes code in different programming languages. The chatbot can therefore be used to debug code, explain it, and help to improve it. More generally, ChatGPT is good at explaining complex topics in simple, easy-to-understand words.

The "deep lesson" has to do with how we collectively design information access systems, and our choices in this moment. Do we lean in to #AIhype or do we level up info hygiene? Do we accept inevitability narratives about centralized control of info systems, or do we resist? pic.twitter.com/os1ahgt9HZ

— @emilymbender@dair-community.social on Mastodon (@emilymbender) January 15, 2023

The Limitations Of ChatGPT

Although the hype around ChatGPT has been enormous, it also has its weaknesses. For example, the language model can give plausible-sounding but incorrect or nonsensical answers. It is therefore strongly recommended to fact-check ChatGPT's answers. And it's not likely that this issue will be fixed any time soon. As OpenAI writes on its website:

“Fixing this issue is challenging, as: (1) during RL training, there’s currently no source of truth; (2) training the model to be more cautious causes it to decline questions that it can answer correctly; and (3) supervised training misleads the model because the ideal answer depends on what the model knows, rather than what the human demonstrator knows.”

OpenAI

Partly for this reason, answers can vary depending on how users phrase their questions or input. The same applies when the user makes an ambiguous request: instead of asking for clarification, ChatGPT guesses the intent behind the question and answers accordingly. The following example illustrates the problem well:

THE 🔑 misunderstanding of chatGPT: when it tells us that the very much alive Noam Chomsky died in 2020, it’s not “drawing on sources.”

It’s drawing on bits of text that were never previously connected & confabulating a relationship between those bits—without checking the data pic.twitter.com/dweDO5bavB

— Gary Marcus (@GaryMarcus) December 8, 2022

Conclusion

Both GPT-3 and Data-to-Text have their place as AI-powered content generation technologies. They both help in specific ways and under different conditions within the creation of different types of content, e.g. the writing of continuous texts or the creation of product descriptions.

One thing is clear: AI cannot replace humans in their role as thinking beings. Instead, it acts as a supporting tool and makes things easier for the user. With its help, copywriters, editors and content managers are relieved of part of their workload and can concentrate on other tasks.

Therefore, and because the demand for written content is steadily increasing, both language technologies will become more and more important in the future.

Background information about automated content generation

An AI content generator is a tool that can help you create various types of content. That includes articles, product descriptions, and reports. This can be a great way to save time and effort when creating new content and ensure that your content is of high quality.

Automated content generation uses artificial intelligence and automated processes to produce different types of copy. As a user, you configure the rules and logic for how the data should appear in the texts. You only need to do this once at the beginning, and the tool then applies the rules to the thousands of texts that are generated. Depending on the AI tools you choose, you can automatically create whole articles or shorter copy such as product descriptions and social share texts.

Natural Language Generation (NLG) refers to the automated generation of natural language by a machine. As a subdomain of computational linguistics, the generation of content is a special form of artificial intelligence. Natural language generation is used in many sectors and for many purposes, such as e-commerce, financial services, and the pharmaceutical sector. It is considered most effective for automating repetitive and time-intensive writing tasks like product descriptions, reports or personalized content.

In short, data-to-text is used in e-commerce, the financial and pharmaceutical sector, in media, and publishing. GPT-3 can be helpful for brainstorming and finding inspiration, for example when the user is suffering from writer's block. Using GPT-3 in chatbots to answer recurring customer queries is also very useful, as having humans generate the text output is inefficient and impractical. For more in-depth information, download our one-pager on Data-to-Text vs. (Chat)GPT.